Human-in-the-Loop

Ethical integration of AI to enhance human decisions in football

Artificial intelligence (AI) is changing how we work, think, and decide across almost every industry, and football is no exception. But with that transformation comes responsibility. In a sport driven by instinct, emotion, and relationships, the way we integrate AI can’t just be about what’s possible. It has to be about what’s right.

The idea of “human-in-the-loop” (HITL) became clearer to me while taking Google’s AI Essentials course. It’s a design principle that places people at key stages of an AI system’s lifecycle—during data collection, training, interpretation, and decision-making. The goal isn’t to slow things down. It’s to stay involved. To ensure AI supports human judgment instead of replacing it.

That same principle kept coming up on Pioneers of AI, a podcast I often turn to for perspective. Across interviews with some of the field’s most forward-thinking voices, one message stood out: AI should empower humans—not override them.

But in football, HITL isn’t just a technical framework. It’s a mindset. A cultural commitment. A way of making sure that the people closest to the pitch still shape the decisions that matter.

I thought about this recently while speaking with a scout from a top club in Europe who uses an AI-powered system to help filter player options based on positional KPIs: press resistance, progressive passes, expected threat, that kind of thing. He told me about a moment that stuck with him. The system had flagged several midfielders as high-potential. But one name he liked wasn’t on the list at all. The model just didn’t rate him: low stats, low impact, not worth further review.

But the scout had seen him live. He remembered how the player moved, how he shaped passing lanes even without touching the ball. He couldn’t quite explain it in data terms—but he knew it mattered. So he pushed back. Asked to pull match footage. Advocated for a second look. Two weeks later, that player was training with the first team.

That moment—the decision to question, to look again, to stay curious—is what “human-in-the-loop” really looks like in practice. Not about resisting AI. Not about handing over control. It’s about staying engaged. Asking better questions. Keeping human perspective at the center of the process.
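To make that concrete, here is a minimal sketch in Python of what keeping a scout in the loop could look like. Everything in it is an assumption for illustration: the `Candidate` fields, the 0.7 threshold, and the player names are hypothetical, and it is not how the club's actual system works. The only point is that a human flag can put a player back on the review list after the model has dropped him.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    model_score: float        # hypothetical 0-1 rating from a scouting model
    scout_flag: bool = False  # set by a person who wants a second look
    scout_note: str = ""      # free-text reason, kept for accountability


def build_review_queue(candidates, threshold=0.7):
    """Return (candidate, source) pairs: model picks plus scout overrides."""
    queue = []
    for c in candidates:
        if c.model_score >= threshold:
            queue.append((c, "model"))
        elif c.scout_flag:
            # The model would have dropped this player; a person keeps him in.
            queue.append((c, "scout override"))
    return queue


candidates = [
    Candidate("Midfielder A", 0.82),
    Candidate("Midfielder B", 0.75),
    Candidate("Midfielder C", 0.41, scout_flag=True,
              scout_note="Shapes passing lanes off the ball; pull match footage."),
    Candidate("Midfielder D", 0.38),
]

for cand, source in build_review_queue(candidates):
    print(f"{cand.name}: {source}")
```

In this sketch, Midfielder C stays in the queue only because someone asked for a second look, and the note records why. The model still does the filtering. It just doesn't get the last word.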

What Is Ethical AI, Really?

We throw around the word “ethics” a lot, but what does it actually mean in practice? For me, it’s less about checklists and policies—and more about asking better questions like:

- What’s fair?

- What’s responsible?

- Who could be impacted by this decision?

- And who gets left out of the loop?

Ethics isn’t just about doing no harm. It’s about being aware of the power you hold—and how that power affects other people’s futures. Especially in football, where a player’s career trajectory can shift with a single data point or a missed opportunity.

And AI doesn’t just reflect our decisions—it amplifies them. At speed. At scale. Often invisibly.

So we have to be deliberate. Because when you let a model decide who gets shortlisted, who gets scouted, or even who gets benched—it’s not just a technical choice. It’s a human one. And it comes with consequences.

That’s why ethical AI can’t be an afterthought. It has to be built into the system from the start—with transparency, with accountability, and with an understanding that not everything that shapes performance shows up in the data.

Video by Google DeepMind: https://www.pexels.com/pt-br/video/18069701/

The Judgment Dilemma

Every new technology promises to improve performance. But it often does so by shifting the center of gravity—away from people, and toward systems.

We see it far beyond football. In education, healthcare, hiring, and justice—decisions are increasingly shaped by algorithms, dashboards, and predictive models. We’re told these tools make things smarter, faster, fairer. But too often, they make them less human.

The logic is familiar: prioritize efficiency. Scale everything. But when scale becomes the goal in itself, something quieter starts to erode: our ability to see the individual, and to take responsibility for what we overlook.

Football might seem like a small corner of this broader trend. But it reflects the same tension. And it gives us a unique opportunity to choose differently.

We’ve already seen how tools like Veo cameras or Hudl’s video systems have transformed how teams capture and review training sessions. Where someone once stood on the sidelines with a handheld camera, now footage is automatically recorded, stored, and clipped. It’s efficient. It saves time. And it frees up human capacity for more complex work.

But with every layer of automation, something shifts. When tagging becomes fully automated—when AI starts deciding what’s important, which moments to highlight, what patterns to flag—it begins to shape not just what we see, but how we think. What we prioritize. What we miss. And that’s the dilemma! Because if we rely only on what the algorithm presents, we risk losing the habit of asking our own questions. We stop noticing what’s not in the frame.

We’ve talked already about the human-in-the-loop as a mindset. In football, it’s what protects the game’s interpretive layer—the part that doesn’t show up in spreadsheets. The part where instinct matters. Where experience adds meaning. Where presence makes the difference. A model might say two players had identical passing stats. But one passed under pressure. The other played safe. That’s often not in the numbers—but almost every coach sees it.
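A small Python sketch shows how two players can look identical in a headline number and very different once context is added. The pass log, the player names, and the `under_pressure` flag are all made up for illustration. Real event data defines pressure in its own ways, and this is not any provider's method.

```python
from collections import defaultdict

# Hypothetical pass log: (player, under_pressure, completed)
passes = [
    ("Player A", True, True), ("Player A", True, True),
    ("Player A", True, False), ("Player A", False, True),
    ("Player B", False, True), ("Player B", False, True),
    ("Player B", False, True), ("Player B", True, False),
]


def completion_rates(pass_log):
    """Return overall and under-pressure completion rates per player."""
    # For each player and bucket, store [completed, attempted].
    totals = defaultdict(lambda: {"all": [0, 0], "pressured": [0, 0]})
    for player, under_pressure, completed in pass_log:
        buckets = ["all"] + (["pressured"] if under_pressure else [])
        for bucket in buckets:
            totals[player][bucket][0] += int(completed)
            totals[player][bucket][1] += 1
    return {
        player: {
            bucket: (made / attempted if attempted else None)
            for bucket, (made, attempted) in stats.items()
        }
        for player, stats in totals.items()
    }


for player, stats in completion_rates(passes).items():
    print(player, stats)
```

Both players complete 75% of their passes overall. Under pressure, Player A completes two of three, while Player B misses the only one he attempts. Even that split is still only a number. Someone has to decide it is worth looking at, and then go and watch the passes.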

The Limits of Data and the Magic of Football

I learned to understand data—and over time, I learned to love it. I believe in it. And in most cases, it helps me make better—or at least more informed—decisions.

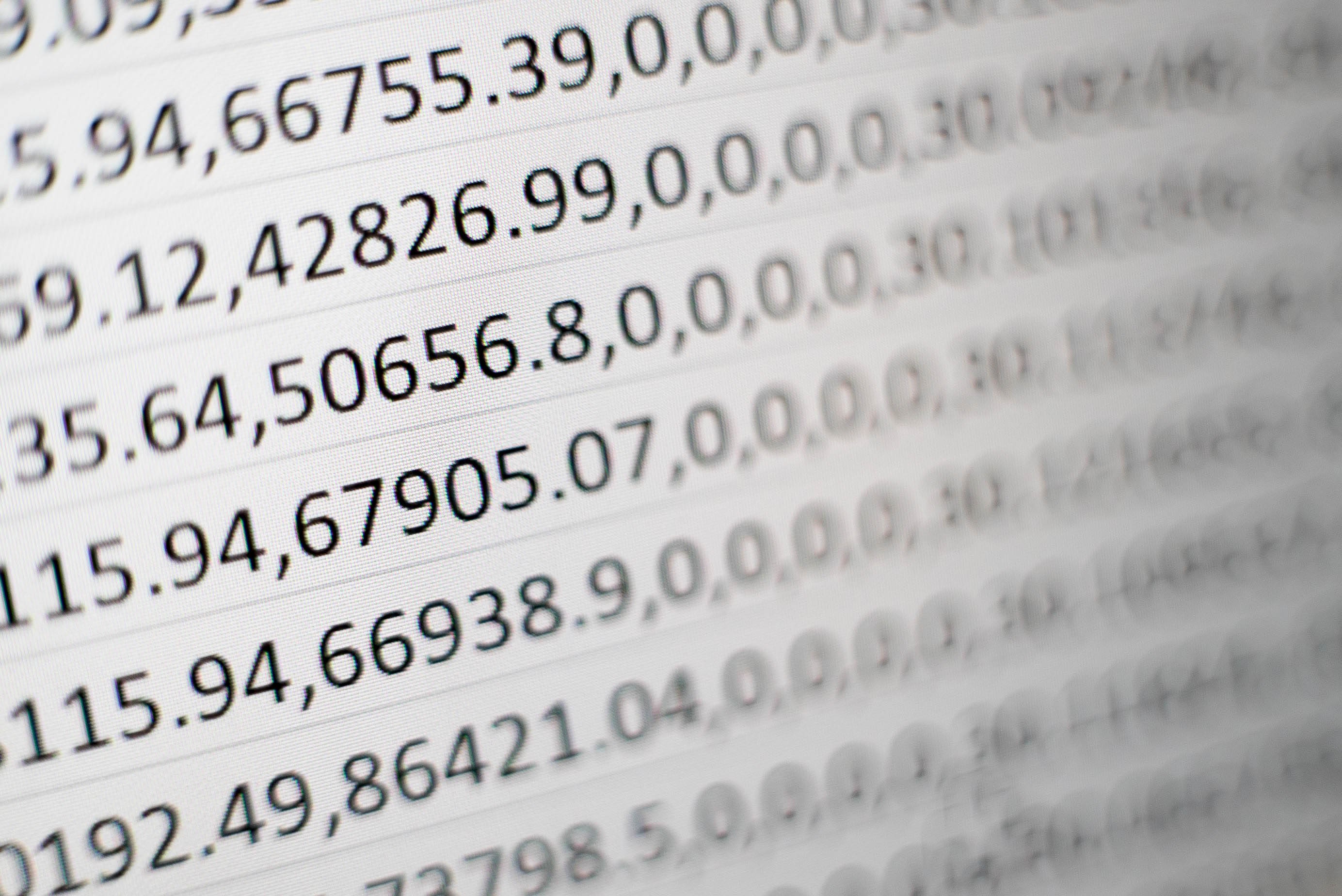

It sharpens how we see the game—pass maps, xG chains, pressure metrics, and more. It gives us language for things coaches have always felt, but couldn’t always explain. And it helps us challenge assumptions—showing us when things aren’t working, even if they look like they are… and sometimes, the opposite. Used well, it brings clarity.

But even the best models have blind spots. Because data reflects what we choose to measure. It captures the visible, but not always the valuable. And without interpretation, it risks being misunderstood.

A chipped pass that breaks two lines but isn’t tagged as a key pass. A defender who shuts down a run without ever touching the ball. A perfectly timed movement in the box that doesn’t lead to a shot—and disappears in the data. A substitution that shifts momentum—not because of tactics, but because of timing, trust, or body language.

These aren’t outliers. They’re the nuance that gives football its depth. And most of them live outside the model.

We saw this most clearly during the COVID seasons. No fans in the stands. No noise. No pressure. And suddenly—home advantage disappeared. Goals per game went up. Yellow cards dropped. The stadium was the same. The players were the same. But the emotion was missing—and the data changed with it.

That’s what I struggle with. Because even though data can help us understand the game, I don’t think it lets us know the game. Not fully. Not emotionally. Not experientially.

I’ve seen coaches base a week’s training plan entirely on match reports and post-game stats. I’ve heard of players being benched because of numbers they barely understood, or because of sprint counts they didn’t hit...

But a game isn’t a spreadsheet. And data doesn’t tell you why something happened—it just tells you that it did. Or maybe not even that. Maybe it’s about what the players felt. What they saw. What we didn’t.

Photo by cottonbro studio: https://www.pexels.com/pt-br/foto/pessoa-segurando-uma-ferramenta-manual-preta-e-prateada-6153354/

Photo by Bernd 📷 Dittrich on Unsplash

Why I Use AI, and Still Ask Questions

I use AI regularly—to reflect, to test ideas, or, like in this case, to help shape my writing. Not to do it for me—but to challenge it, structure it, refine it.

In many ways, it’s made my thinking sharper. I spend less time wrestling with structure and more time exploring meaning. I can zoom in on a moment—or zoom out and reshape the frame entirely.

But I still feel a bit uneasy about AI sometimes.

Maybe it’s the pace of it all. Maybe it’s how these tools quietly become part of everything. Maybe it’s just how easy it is to stop questioning their suggestions.

Or maybe—just maybe—it’s that childhood fear that the world might really turn into Terminator 2...

I’ve found that the most responsible use of AI isn’t about having it generate answers. It’s about using it to challenge your own. The best moments don’t come from outsourcing judgment. They come from deepening it.

More and more, the conversation around AI is shifting toward agents and automation. And yes—for the boring stuff, sure. Let it run. But that can’t be the end goal.

Because the goal shouldn’t be automation.

It should be clarity.

And if AI helps us think more clearly, without thinking less, that’s a step forward.

Smarter, Still Human: When AI Works—and When It Doesn’t

Across football, AI is no longer a future idea. It’s part of the everyday. From set-piece modeling and injury risk forecasting to opponent scouting and personalised nutrition—data-driven decisions now shape everything from training to transfers.

Take TacticAI, developed by DeepMind with Liverpool FC. It helps coaches plan set pieces by predicting likely outcomes based on different player positions and movements. Not to replace the coach’s instinct—but to offer new ways of seeing.

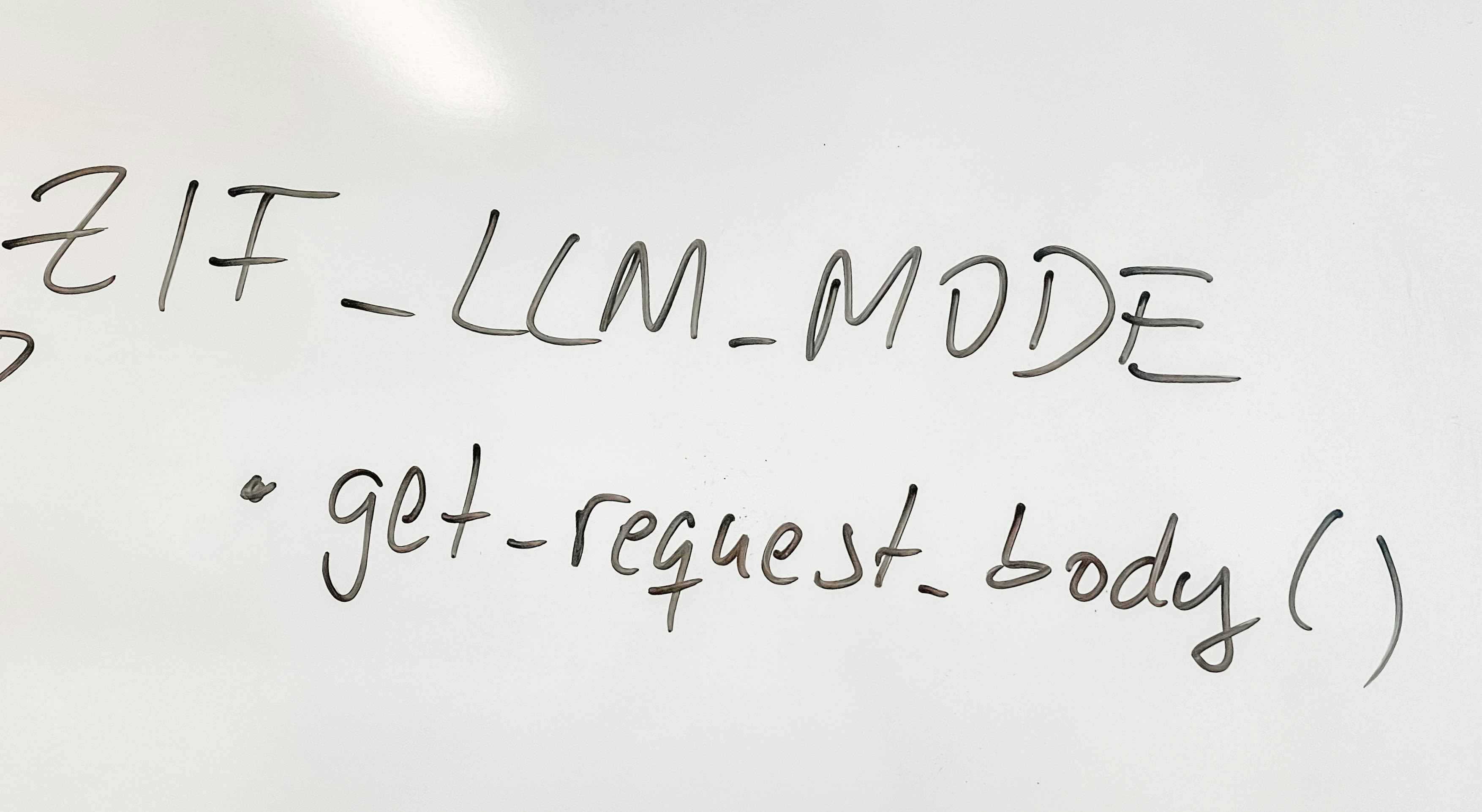

Meanwhile, researchers like Rahimian, Flisar, and Sumpter are experimenting with “wordalisation”—using large language models to turn football data into plain-language feedback. It’s not just about crunching numbers. It’s about making insight actionable for players and coaches on the ground. These tools don’t take the emotion out of football. They try to help reveal the complexity that emotion often hides.

That’s where AI shines: not in replacing judgment—but in expanding it. Not by giving us the answer—but by helping us ask better questions.

Still, innovation isn’t always received the way it’s intended.

In April 2025, broadcaster Max and Twelve Football introduced AIDA, an AI-driven commentator for Sweden’s Allsvenskan. Designed to supplement human experts—not replace them—AIDA delivers real-time analysis rooted entirely in football data. According to Max producer Marcus Sennewald, the goal was to bring “a new angle” to analysis—one that merges AI-generated insights with expert commentary.

But when AIDA made her on-air debut, fans responded almost immediately:

“Soulless.”

“Creepy.”

“Like watching football with a calculator.”

The insights weren’t wrong. But something was missing—rhythm, tension, presence. AIDA could tell us what happened. But it couldn’t tell us how it felt. Max defended the innovation. Football chief Pär Andersson reaffirmed their commitment to progress, reminding critics that AIDA’s analysis was “rooted solely in data” and built to challenge—not replicate—emotionally driven perspectives.

And maybe that’s something to celebrate. Because every leap forward includes risk. And sometimes, the only way to test the line between support and substitution is to cross it.

That’s happening in other industries too. AI models now generate entire advertising campaigns—without hiring a single human model. It’s fast. It’s cost-effective. It works.

But is that what we want from football?

Because in this game, even the most objective moments—goals, fouls, substitutions—carry emotional weight. And when that emotional layer is stripped away, the game doesn’t just feel different.

It feels less alive.

Discomfort Isn’t Always Resistance

It’s easy to dismiss pushback as fear of the future. We’ve heard it before—with self-checkouts, online banking, even video analysis itself. At first, people resist. And then, gradually, it becomes normal.

So maybe AI-led commentary—or even AI-led coaching—will follow the same path. Maybe, over time, we’ll adapt. But maybe that’s exactly why we need to pause.

Because discomfort isn’t always about fear. Sometimes it’s about care. Sometimes it’s your conscience trying to tell you: pay attention.

You can send a player a video analysis clip with time-stamped notes. But it’s not the same as sitting down beside them, watching it together, going through it and reading their reaction in real time. One delivers feedback. The other builds trust.

As Mo Gawdat, former Chief Business Officer at Google X, put it: “The greatest risk of AI isn’t the technology—it’s our morality.”

The real threat isn’t that machines will think for themselves. It’s that we’ll stop thinking for ourselves. Or worse—that we’ll stop thinking about each other.

Conclusion: Only Humans Can Live It

AI can describe football. But only humans can live it.

And maybe—if we build it with care—AI can help us live it better. Not by doing it for us. But by helping us do it with more clarity, more intention, and more connection.

Because football is just one field. But it asks the same questions every other field must face:

- What do we trust?

- What do we value?

- And what are we willing to give up for speed, precision, or scale?

These aren’t questions for systems. They’re questions for us. And sometimes, the answer arrives in the most irrational, illogical, unforgettable way.

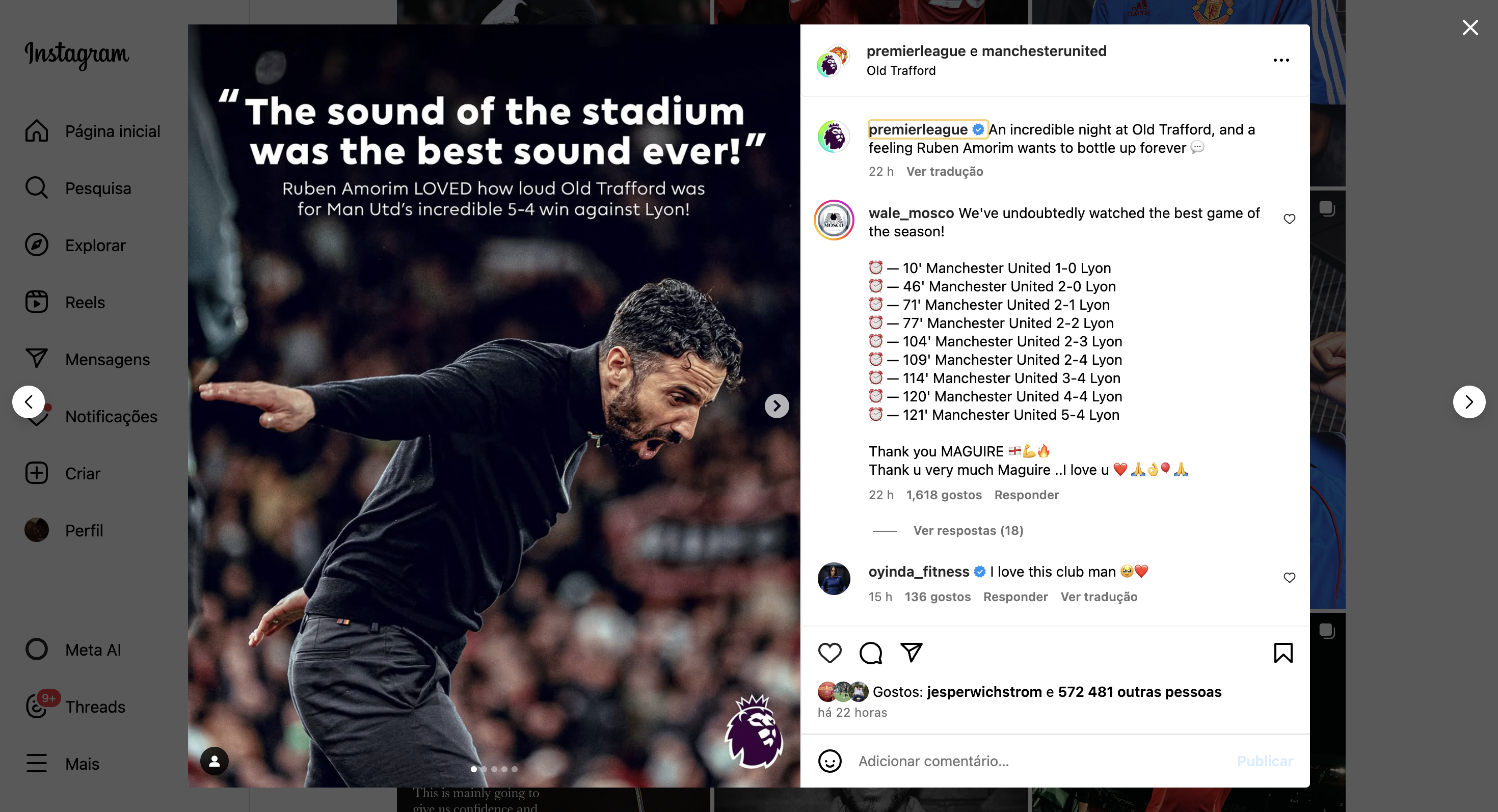

Like the Europa League quarter-final: Manchester United vs Lyon.

A season already far below expectations for a club like United.

Up 2–0.

Then, with a man advantage… down 2–4.

Fans leaving the stadium—too frustrated to take any more.

And somehow, in the dying minutes, they find a way to win 5–4 in stoppage time.

“Football, bloody hell,” as Sir Alex Ferguson once said.

No model predicted it.

No data could explain it.

But every fan who stayed felt it.

That’s football. And that’s what’s at stake.

The answers we choose in football… may just show us what kind of society we’re building far beyond it.

References

- DeepMind. (2024). *TacticAI: Assistive AI for football tactics*. https://www.deepmind.com/blog/tacticai

- El Kaliouby, R. (2023–2024). *Pioneers of AI* [Podcast series]. https://www.pioneersof.ai

- European Commission. (2024). *Artificial Intelligence Act*. https://artificialintelligenceact.eu

- Gawdat, M. (2024). *The AI Dilemma*. Interview with Tom Bilyeu on *Impact Theory* [YouTube]. https://www.youtube.com/watch?v=EUjc1WuyPT8

- Google. (2024). *AI Essentials* [Online course]. Grow with Google. https://grow.google/certificates/ai-essentials

- IEEE. (2022). *Ethically Aligned Design: A Vision for Prioritizing Human Wellbeing with Autonomous and Intelligent Systems*. https://ethicsinaction.ieee.org

- Leitner, M. C., & van de Ven, N. (2024). *Home advantage in European football: A mediation and moderation model of the COVID-19 effect*. *International Journal of Sport Policy and Politics*. https://doi.org/10.1080/1750984X.2024.2358491

- Sumpter, D. (2024). *Wordalisation: Making football AI explain itself*. *Soccermatics on Medium*. https://soccermatics.medium.com

- Svensson, L. (2025, April 13). *Broadcasting company Max stands by its AI football analyst Aida amid social media backlash*. VIASPORT. https://viasport.se/articles/aida-debut-max-defends-innovation

- Eurosport. (2025, April 4). *Max skriver historie – «ansetter» AI-ekspert i Allsvenskan*. *Eurosport Norge*. https://www.eurosport.no/fotball/allsvenskan/2025/max-skriver-historie-ansetter-ai-ekspert-i-allsvenskan_sto9619250/story.shtml